ARRC: Advanced Reasoning Robot Control

Ammar Jaleel Mahmood, Eugene Vorobiov, Salim Rezvani, and Robin Chhabra

Toronto Metropolitan University


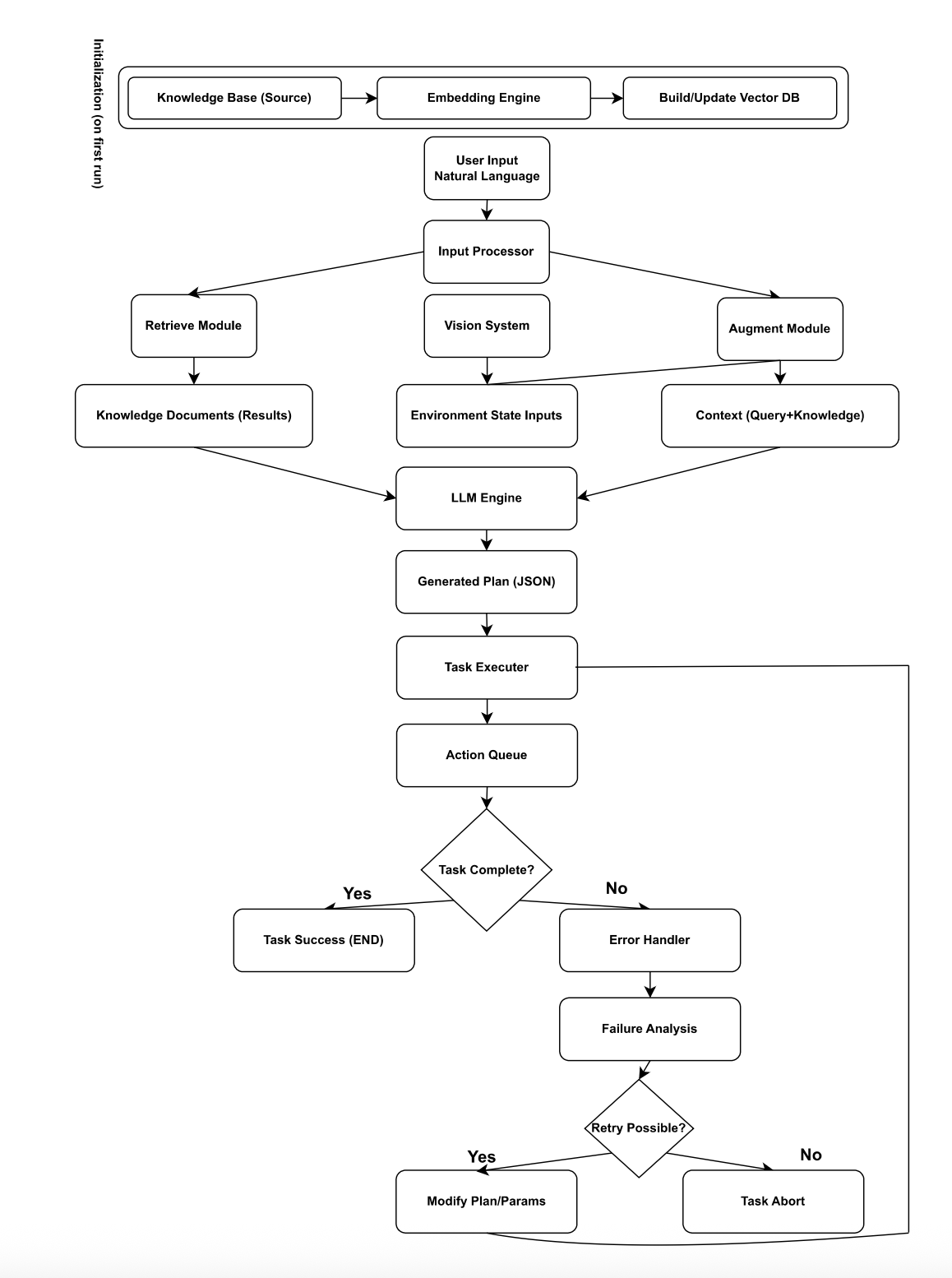

We present ARRC (Advanced Reasoning Robot Control), a practical system that connects natural-language instructions to safe, local robotic control by combining Retrieval-Augmented Generation (RAG) with RGB–D perception and guarded execution on an affordable robot arm. The system indexes curated robot knowledge (movement patterns, task templates, and safety heuristics) in a vector database, retrieves task-relevant context for each instruction, and conditions a large language model (LLM) to synthesize JSON-structured action plans. These plans are executed on a UFactory xArm 850 fitted with a Dynamixel-driven parallel gripper and an Intel RealSense D435 camera. Perception fuses AprilTag detections with depth to produce object-centric metric poses; execution is constrained by a set of software safety gates (workspace bounds, speed/force caps, timeouts, and bounded retries). We describe the architecture, knowledge design, integration choices, and a reproducible evaluation protocol for tabletop scan/approach/pick–place tasks, and we report experimental results that demonstrate the efficacy of the approach. Our design shows that RAG-based planning can substantially improve plan validity and adaptability while keeping perception and low-level control local to the robot.

ARRC Tasks

We evaluate ARRC in a tabletop manipulation setting with common, easily identified items. Scenarios include scanning with fallback arcs, approach and grasp with a guarded Z retreat, and structured placement at a target pose. The setup is designed to exercise retrieval prompts, plan synthesis, and the safety gates.

Method Overview

ARRC has three components: 1) Dialog and retrieval: user goals and environment notes condition retrieval over a curated knowledge base, and an LLM then drafts a subtask plan with task-space waypoints. 2) Plan validation: environment checks test each step for reachability and collisions, and invalid steps trigger a short in-context repair. 3) Execution: a motion layer translates poses into xArm waypoints with guarded speeds and force limits.
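The plan-validation step can be pictured with a minimal sketch. It assumes a plan shaped as a JSON object with a `steps` list; the field names, action names, and numeric limits are hypothetical, since this page does not give the actual schema or limits. It covers only schema, workspace, and speed checks, not reachability or collision tests. The returned problem strings are the kind of feedback that could be passed back to the LLM for an in-context repair.

```python
import json

# All names and numbers below are illustrative assumptions, not ARRC's real schema.
WORKSPACE_MM = {"x": (150.0, 700.0), "y": (-400.0, 400.0), "z": (20.0, 500.0)}
MAX_SPEED_MM_S = 100.0
ALLOWED_ACTIONS = {"scan", "move_to", "grasp", "release", "retreat"}


def is_number(value):
    # bool is a subclass of int in Python, so exclude it explicitly.
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def validate_plan(plan_json):
    """Return a list of problems; an empty list means every check passed."""
    try:
        plan = json.loads(plan_json)
    except json.JSONDecodeError as exc:
        return [f"plan is not valid JSON: {exc}"]

    steps = plan.get("steps") if isinstance(plan, dict) else None
    if not isinstance(steps, list) or not steps:
        return ["plan must be an object with a non-empty 'steps' list"]

    problems = []
    for i, step in enumerate(steps):
        if not isinstance(step, dict):
            problems.append(f"step {i}: not an object")
            continue
        action = step.get("action")
        if action not in ALLOWED_ACTIONS:
            problems.append(f"step {i}: unknown action {action!r}")
        pose = step.get("pose")
        if isinstance(pose, dict):
            # Every axis of a task-space waypoint must lie inside the workspace box.
            for axis, (low, high) in WORKSPACE_MM.items():
                value = pose.get(axis)
                if not is_number(value) or not low <= value <= high:
                    problems.append(
                        f"step {i}: {axis}={value!r} outside [{low}, {high}] mm"
                    )
        elif pose is not None:
            problems.append(f"step {i}: pose must be an object")
        speed = step.get("speed", 0.0)
        if not is_number(speed) or speed > MAX_SPEED_MM_S:
            problems.append(f"step {i}: speed {speed!r} exceeds {MAX_SPEED_MM_S} mm/s")
    return problems
```

A plan with no problems would proceed to execution; a non-empty list would be appended to the prompt for the repair round.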

Technical Summary Video

Example Episodes

Real World Experiments

Successful episode

Recovery after low-confidence detection

ARRC Details

Hardware and software

- UFactory xArm 850 with Dynamixel-driven gripper

- Intel RealSense D435 RGB–D camera

- Ubuntu 22.04, Python 3.10, optional ROS 2

- Sentence Transformers embeddings with ChromaDB or FAISS
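To illustrate the retrieval step that this stack supports, here is a minimal, dependency-free sketch. It is not the ARRC implementation: a bag-of-words vector stands in for a Sentence Transformers embedding, a sorted list stands in for a ChromaDB or FAISS index, and the knowledge snippets, function names, and prompt layout are all hypothetical.

```python
import math
from collections import Counter

# Hypothetical knowledge snippets; the real knowledge base is not reproduced here.
KNOWLEDGE = [
    "scan pattern: sweep the wrist camera in an arc when no tag is visible",
    "pick template: approach from above, close gripper, retreat along z",
    "safety heuristic: keep the tool above the table and cap speed near objects",
]


def embed(text):
    # Stand-in for a sentence embedding: a sparse word-count vector.
    cleaned = text.lower().replace(",", " ").replace(":", " ")
    return Counter(cleaned.split())


def cosine(a, b):
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, k=2):
    """Return the k snippets most similar to the query."""
    q = embed(query)
    ranked = sorted(KNOWLEDGE, key=lambda doc: cosine(q, embed(doc)), reverse=True)
    return ranked[:k]


def build_prompt(instruction, k=2):
    """Prepend retrieved context to the instruction before calling an LLM."""
    context = "\n".join(f"- {doc}" for doc in retrieve(instruction, k))
    return f"Context:\n{context}\n\nInstruction: {instruction}\nReply with a JSON plan."
```

In a real deployment the `embed` and `retrieve` stand-ins would be replaced by the embedding model and the vector store listed above; the ranking-by-similarity idea is the same.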

Execution and safety

- Workspace limits and velocity caps at runtime

- Guarded Z approach and retreat sequences

- Timeouts with bounded retries and safe stop

- Pose transforms from camera to robot base
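Two of the items above can be sketched in a few lines: mapping a camera-frame point into the robot base frame, and wrapping an action in bounded retries with a safe stop. This is a sketch under stated assumptions, not the authors' code: the function names, the `SafeStop` exception, the example calibration matrix, and the default budgets are hypothetical.

```python
import time


def camera_to_base(T_base_cam, p_cam):
    """Apply a 4x4 homogeneous transform (nested lists, row-major) to a 3D point."""
    x, y, z = p_cam
    return tuple(
        row[0] * x + row[1] * y + row[2] * z + row[3] for row in T_base_cam[:3]
    )


class SafeStop(RuntimeError):
    """Raised when an action exhausts its retry or time budget (hypothetical name)."""


def run_guarded(action, max_retries=2, timeout_s=5.0):
    """Call action() until it returns True; return the index of the successful attempt.

    The deadline is checked between attempts only, so this sketch cannot
    interrupt a call that is already blocking.
    """
    deadline = time.monotonic() + timeout_s
    for attempt in range(max_retries + 1):
        if time.monotonic() > deadline:
            break
        if action():
            return attempt
    raise SafeStop("action did not succeed within its retry/time budget")


# Example calibration (an assumption): camera 600 mm above the base origin,
# offset 300 mm along base x, looking straight down.
T_BASE_CAM = [
    [0.0, -1.0, 0.0, 300.0],
    [-1.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, -1.0, 600.0],
    [0.0, 0.0, 0.0, 1.0],
]
```

With this example matrix, a tag seen at `(10.0, 20.0, 400.0)` mm in the camera frame maps to `(280.0, -10.0, 200.0)` mm in the base frame. A caller would catch `SafeStop` and command the arm to halt rather than retry further.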

BibTeX

@misc{arrc2025,

title={ARRC: Advanced Reasoning Robot Control—Knowledge-Driven Autonomous Manipulation Using Retrieval-Augmented Generation},

author={Ammar Jaleel Mahmood and Eugene Vorobiov and Salim Rezvani and Robin Chhabra},

year={2025},

eprint={2510.05547},

archivePrefix={arXiv},

primaryClass={cs.RO}

}

Contact

Please reach out to ammar.j.mahmood@torontomu.ca or robin.chhabra@torontomu.ca